If customers can’t immediately find what they’re looking for in an online shop, they’re gone faster than you can say “conversion rate.”

Magento's default search? Fine for basic queries, but it breaks down the moment users start describing products in their own words.

The solution: RAG-based semantic search that understands what customers mean, not just what they type. Built with OpenAI models and a Pinecone vector database, it can turn your Magento search from a source of frustration into a powerful revenue engine.

What Makes Semantic Search So Powerful?

The key difference from traditional search is that semantic search aims to understand what customers are actually looking for. Instead of simply checking whether a search term appears verbatim in product names or descriptions, it focuses on meaning and user intent, which makes the search experience far more flexible and intuitive.

What does that look like in practice? Imagine a customer searching for “comfortable shoes for long-distance running.” A classic keyword-based search may fail if that exact phrasing does not appear in the product data. Semantic search, however, can recognize that “long-distance running” is closely related to marathon or road running, and that “comfortable” in this context implies good cushioning and support.

Based on this understanding, the system delivers relevant running shoes, even if the product descriptions use different wording. This deeper understanding reduces search abandonment, improves result accuracy, and enables customers to find products the way they naturally search for them.

What Is Retrieval-Augmented Generation (RAG)?

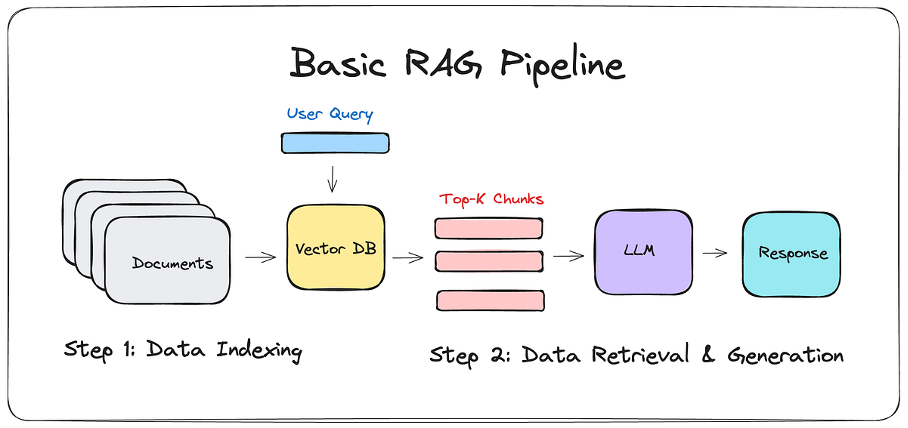

Retrieval-Augmented Generation (RAG) is an AI pattern that combines search with text generation. Instead of answering solely from what a language model learned during training, RAG grounds the model's answers in real, up-to-date data from your Magento store.

The process has two steps:

First, semantic search retrieves relevant products or content from your Magento data. Then these results are passed to a language model, which generates a response based on that information.

Because the model is instructed to answer only from the retrieved Magento data, its answers are more precise, easier to trace back to their source, and far less likely to contain invented details.

In Magento, RAG can be used to explain search results, recommend suitable products, or answer customer questions in a natural and context-aware manner.
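The retrieve-then-generate flow can be sketched in Python as a small function that receives both steps as parameters. This is a minimal sketch under our own assumptions: `retrieve` and `generate` are hypothetical callables (in practice, for example, a vector-database query and a language-model call), and the prompt wording is illustrative. Grounding comes from two places here: the prompt tells the model to use only the retrieved products, and generation is skipped entirely when retrieval finds nothing.

```python
from typing import Callable, Sequence

# Hypothetical signatures: retrieve(query, top_k) returns product text blocks,
# generate(prompt) returns the model's answer as a string.
Retriever = Callable[[str, int], Sequence[str]]
Generator = Callable[[str], str]

FALLBACK = "Sorry, I couldn't find matching products in our catalog."


def build_prompt(query: str, context: Sequence[str]) -> str:
    """Number the retrieved snippets and instruct the model to stay within them."""
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(context, start=1))
    return (
        "Answer the customer's question using only the products listed below. "
        "If none of them fit, say so.\n\n"
        f"Products:\n{numbered}\n\n"
        f"Question: {query}"
    )


def rag_answer(query: str, retrieve: Retriever, generate: Generator, top_k: int = 5) -> str:
    # Step 1: retrieval (semantic search over the indexed Magento data).
    context = list(retrieve(query, top_k))
    if not context:
        # Nothing relevant was retrieved, so don't let the model improvise.
        return FALLBACK
    # Step 2: generation, grounded in the retrieved context.
    return generate(build_prompt(query, context))
```

Passing the two steps in as functions keeps the pattern independent of any particular vendor and makes it easy to test with simple stand-ins.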

RAG-Based Semantic Search in Magento

Instead of guessing keywords, customers search the way they think and speak. This reduces frustration, feels intuitive, and works especially well on mobile. Customers no longer have to play “search engine bingo,” and even less tech-savvy users can more easily find the product they’re looking for.

It also pays off—literally—for the business. Better matches mean products are found faster, sessions last longer, and conversion rates increase. At the same time, RAG can answer many standard questions directly through search or chat, reducing support workload before a ticket is even created.

In short: less frustration for users, more revenue for the business.

Core Functionality of the System

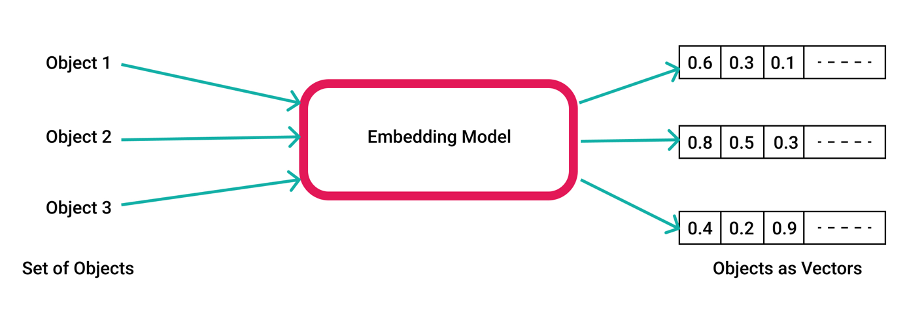

At its core, the system works by translating Magento content into a format that machines can understand and compare. Product descriptions, CMS pages, and other content are converted into numerical representations known as embeddings. Rather than merely processing words, embeddings capture the underlying meaning of a text.

When a search is performed, the query is also transformed into an embedding and compared with the vectors stored in a vector database. The closest matches are returned and, if needed, consolidated into a clear, natural response by a language model.

In this way, text becomes meaning and meaning becomes relevant results.
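To make “closest in meaning” concrete: vector search commonly ranks stored embeddings by their cosine similarity to the query embedding. The sketch below does this by brute force over a few made-up three-dimensional vectors. Real embeddings have hundreds or thousands of dimensions, and a vector database such as Pinecone uses specialized indexes rather than scanning every vector, so treat this purely as an illustration of the idea; the IDs and numbers are invented.

```python
import math
from typing import Mapping, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction, 0.0 unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], vectors: Mapping[str, Sequence[float]], k: int = 3) -> list[tuple[str, float]]:
    """Brute-force nearest-neighbor search: score every stored vector, keep the best k."""
    scored = [(item_id, cosine_similarity(query, vec)) for item_id, vec in vectors.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional "embeddings" (made up for illustration only).
stored = {
    "marathon-shoe": [0.9, 0.1, 0.0],
    "trail-shoe": [0.7, 0.3, 0.1],
    "rain-jacket": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.2, 0.0]  # stands in for "comfortable shoes for long-distance running"
print(top_k(query_vec, stored, k=2))
```

The two shoes come out on top because their vectors point in nearly the same direction as the query vector, while the jacket's vector points elsewhere.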

Preparing Magento Data

The first technical step is to collect text data from Magento. This includes product names, descriptions, attributes, category texts, CMS pages, and FAQ content—in short, everything that provides context and meaning to the products.

The goal is to capture as much meaningful information as possible. For each product, the relevant texts are consolidated into a clean, structured text block. For example, the name, description, and key attributes are combined. This text later represents the “meaning” of the product for semantic search.

As always, the quality of the preparation directly impacts the results. Semantic search is only as good as the texts it is built on.
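A minimal Python sketch of this preparation step. It assumes products have already been exported from Magento as plain dictionaries; the field names (`name`, `description`, `attributes`) and the `Label: value` layout are our own illustrative choices, not a Magento format. HTML is stripped because product descriptions often contain markup that adds nothing to the meaning.

```python
import html
import re

# Assumption: products arrive as flattened dictionaries; adapt the field names
# to however you export your catalog data.
TAG_RE = re.compile(r"<[^>]+>")
WHITESPACE_RE = re.compile(r"\s+")


def clean_text(raw: str) -> str:
    """Remove HTML tags and entities and collapse whitespace."""
    text = TAG_RE.sub(" ", raw or "")
    text = html.unescape(text)
    return WHITESPACE_RE.sub(" ", text).strip()


def build_product_text(product: dict) -> str:
    """Combine name, description, and key attributes into one text block for embedding."""
    parts = [f"Product: {clean_text(product['name'])}"]
    description = clean_text(product.get("description", ""))
    if description:
        parts.append(f"Description: {description}")
    for label, value in product.get("attributes", {}).items():
        cleaned = clean_text(str(value))
        if cleaned:  # skip empty attributes so they don't add noise
            parts.append(f"{label}: {cleaned}")
    return "\n".join(parts)


shoe = {
    "sku": "RUN-100",
    "name": "Road Glide 3",
    "description": "<p>Cushioned road shoe for long runs &amp; marathon training.</p>",
    "attributes": {"Cushioning": "High", "Surface": "Road", "Color": ""},
}
print(build_product_text(shoe))
```

This prints four lines: the product name, the cleaned description (with `&amp;` turned into `&`), and the two non-empty attributes.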

Creating Embeddings with OpenAI

Once the Magento data has been prepared, the next step is generating embeddings. The texts are sent to OpenAI's embeddings API, which converts them into numerical vectors that represent the meaning of the content. Texts with similar meanings end up close to each other in vector space.
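A sketch of the embedding step using the official `openai` Python package (version 1 or later), whose client exposes `embeddings.create(model=..., input=...)`. Assumptions: `text-embedding-3-small` is just one possible model, the batch size of 100 is arbitrary, and the client is passed in as a parameter so the function can be exercised without network access. With an API key in the `OPENAI_API_KEY` environment variable, you would pass `OpenAI()` as the client.

```python
from typing import Any, Sequence

# Assumption: any OpenAI embedding model could be used; this one returns 1,536-dimensional vectors.
EMBEDDING_MODEL = "text-embedding-3-small"


def embed_texts(client: Any, texts: Sequence[str], batch_size: int = 100) -> list[list[float]]:
    """Embed texts in batches and return one vector per input, in input order.

    `client` is expected to be an `openai.OpenAI` instance (or anything with the
    same `embeddings.create` interface).
    """
    vectors: list[list[float]] = []
    for start in range(0, len(texts), batch_size):
        batch = list(texts[start:start + batch_size])
        response = client.embeddings.create(model=EMBEDDING_MODEL, input=batch)
        # Each returned item carries the index of its input; sort to preserve order.
        for item in sorted(response.data, key=lambda d: d.index):
            vectors.append(item.embedding)
    return vectors


# Real usage (requires network access and an API key), where product_texts is
# your list of prepared product text blocks:
# from openai import OpenAI
# vectors = embed_texts(OpenAI(), product_texts)
```

Batching keeps the number of API requests low when a large catalog is embedded for the first time.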

This is where the real advantage lies: the system compares meaning rather than keywords. Each product, CMS page, and FAQ entry receives its own embedding, which is stored for future search queries. Embeddings only need to be recalculated when the underlying content changes, which keeps processing time and API costs manageable even for large product catalogs.
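One way to recalculate embeddings only when content changes is to store a hash of the exact text that was embedded and compare it on the next run. A minimal sketch, assuming you persist those hashes between runs (where is left open) and key items by a string ID of your choosing; `plan_sync` is a hypothetical helper name.

```python
import hashlib
from typing import Mapping


def content_hash(text: str) -> str:
    """Fingerprint of the exact text that was embedded."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(current_texts: Mapping[str, str], stored_hashes: Mapping[str, str]) -> tuple[list[str], list[str]]:
    """Compare current Magento texts with the hashes saved at the last embedding run.

    Returns (ids_to_embed, ids_to_delete): new or changed items need a fresh
    embedding; items that no longer exist in Magento should be removed from the index.
    """
    to_embed = sorted(
        item_id
        for item_id, text in current_texts.items()
        if stored_hashes.get(item_id) != content_hash(text)
    )
    to_delete = sorted(set(stored_hashes) - set(current_texts))
    return to_embed, to_delete
```

Hashing the final text block rather than individual fields means any change that affects what gets embedded, including a change in how the text is assembled, triggers a recalculation.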

Storing Embeddings in Pinecone

Once the embeddings have been created, they are stored in Pinecone, a vector database designed for fast similarity search. Given a query vector, it finds the stored vectors that are closest in meaning, typically within milliseconds, even for very large datasets.

In addition to the embeddings, Pinecone stores metadata such as product IDs, SKUs, or categories. This metadata is essential for filtering results and for linking each match back to the corresponding item in Magento.
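A sketch of writing embeddings to Pinecone together with metadata. It uses the record shape that the Pinecone Python SDK's `upsert(vectors=...)` accepts (`id`, `values`, `metadata`); the input field names (`entity_id`, `sku`, `categories`), the `product-` ID prefix, the `content_type` field, and the batch size of 100 are our own assumptions. The index object is passed in, so with the real SDK it would come from `Pinecone(api_key=...).Index("your-index-name")`.

```python
from typing import Any, Sequence


def build_records(items: Sequence[dict], vectors: Sequence[Sequence[float]]) -> list[dict]:
    """Pair each embedding with the metadata needed to link a match back to Magento."""
    if len(items) != len(vectors):
        raise ValueError("items and vectors must have the same length")
    return [
        {
            "id": f"product-{item['entity_id']}",  # hypothetical ID scheme
            "values": list(vector),
            "metadata": {
                "entity_id": item["entity_id"],
                "sku": item["sku"],
                "categories": list(item["categories"]),  # list of strings, usable in filters
                "content_type": "product",
            },
        }
        for item, vector in zip(items, vectors)
    ]


def upsert_in_batches(index: Any, records: Sequence[dict], batch_size: int = 100) -> None:
    """Send records in chunks; `index` is expected to be a Pinecone Index object."""
    for start in range(0, len(records), batch_size):
        index.upsert(vectors=list(records[start:start + batch_size]))
```

Keeping the Magento entity ID and SKU in the metadata means a search result can be resolved to a real product without a second lookup table.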

Semantic Search in Action

When customers search in the Magento store, their query is processed the same way as the product data. The text is converted into an embedding, which is then sent to Pinecone and compared with the stored vectors. The content whose meaning is closest to the query is retrieved quickly and precisely, regardless of the exact words used.

Pinecone returns the closest matches together with their metadata, which the system uses to load the matching products or content from Magento. Because matching is based on meaning rather than keywords, results are typically far more relevant than those of traditional keyword search, and customers don't need perfectly phrased queries to get them. The search responds to the intent behind the request, not just its wording.
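A sketch of the query side: the query embedding goes to Pinecone via `index.query(vector=..., top_k=..., include_metadata=True, filter=...)`, and the matches are mapped back to Magento entity IDs. Assumptions: the metadata layout (`entity_id`, `categories`) matches how records were stored, `semantic_search` is a hypothetical helper, and the `min_score` cut-off of 0.3 is arbitrary and would need tuning for your embedding model. The returned IDs could then be used to load products through Magento's product repository or REST API.

```python
from typing import Any, Optional, Sequence


def semantic_search(
    index: Any,
    query_vector: Sequence[float],
    top_k: int = 10,
    category: Optional[str] = None,
    min_score: float = 0.3,
) -> list[int]:
    """Query a Pinecone index and return Magento entity IDs, best match first."""
    kwargs: dict[str, Any] = {"vector": list(query_vector), "top_k": top_k, "include_metadata": True}
    if category is not None:
        # Metadata filter: only vectors whose `categories` list contains this value.
        kwargs["filter"] = {"categories": {"$in": [category]}}
    response = index.query(**kwargs)
    entity_ids = []
    for match in response.matches:  # matches come back ordered by similarity
        # Drop weak matches; the threshold is an assumption that needs tuning.
        if match.score >= min_score and match.metadata:
            # Numeric metadata may come back as a float, so cast it.
            entity_ids.append(int(match.metadata["entity_id"]))
    return entity_ids
```

Filtering on metadata inside the vector query, rather than afterwards, means the top results already respect constraints such as the current category.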

RAG for Helpful Answers

RAG goes beyond simple product listings. Once relevant products or information have been identified, they are passed to a language model, which generates a natural, customer-friendly response.

In the running shoe example, this means the system doesn’t just display a product list—it can also explain which product is best suited for marathon training and why. This creates a more personalized, interactive shopping experience and helps customers make informed decisions.

Whether for AI-powered search summaries, chatbots, or guided product selection, the approach works wherever search should deliver genuine recommendations rather than just a list of results.
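A sketch of the generation step for the running shoe example, using the `openai` package's `chat.completions.create` call. Assumptions: the model name `gpt-4o-mini`, the system prompt wording, and the low temperature are illustrative choices; `recommend` is a hypothetical helper; and the client is passed in so that, in production, you would supply `OpenAI()` and the product texts returned by the search step.

```python
from typing import Any, Sequence

# Assumption: any chat-capable OpenAI model could be used here.
CHAT_MODEL = "gpt-4o-mini"

SYSTEM_PROMPT = (
    "You are a shopping assistant for an online store. Recommend products only "
    "from the list provided. Briefly explain why each recommendation fits the "
    "customer's request. If nothing fits, say so."
)


def recommend(client: Any, query: str, products: Sequence[str]) -> str:
    """Generate a short recommendation grounded in the retrieved product texts."""
    product_list = "\n\n".join(products)
    messages = [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": f"Products:\n{product_list}\n\nCustomer request: {query}"},
    ]
    # A low temperature keeps answers close to the provided product data.
    response = client.chat.completions.create(model=CHAT_MODEL, messages=messages, temperature=0.2)
    return response.choices[0].message.content
```

Putting the rules in the system message and the retrieved products in the user message keeps the instructions stable while the context changes with every query.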

Conclusion

RAG-based semantic search takes Magento to the next level.

Keyword guessing gives way to true understanding. Customers search the way they think, and the combination of Magento data, OpenAI models, and Pinecone vector search returns relevant results with clear explanations.

The result: less frustration, better decisions, and more conversions. Magento search evolves from a simple search box into an intelligent shopping assistant that delights users while driving business goals.

Contact us to learn more about integrating RAG-based semantic search into your Magento store.